TIME: You’re partnering with, among others, Harvard University on this. In an alternate Lady Gaga universe, would you have liked to have gone to Harvard?

Lady Gaga: I don’t know. I am going to Harvard today. So that’ll do.

— Belinda Luscombe, Time Magazine, March 12, 2012

There was a sense of déjà vu about the latest red meat scare and I thought that my previous post, as well as those of others, had covered the bases, but I just came across a remarkable article from the Harvard Health Blog. It was entitled “Study urges moderation in red meat intake.” It describes how the “study linking red meat and mortality lit up the media…. Headline writers had a field day, with entries like ‘Red meat death study,’ ‘Will red meat kill you?’ and ‘Singing the blues about red meat.’”

What’s odd is that this is all described from a distance as if the study by Pan, et al (and likely the content of the blog) hadn’t come from Harvard itself but was rather a natural phenomenon, similar to the way every seminar on obesity begins with a slide of the state-by-state development of obesity as if it were some kind of meteorologic event.

When the article refers to “headline writers,” we are probably supposed to imagine sleazy tabloid publishers like the ones who are always pushing the limits of first amendment rights in the old Law & Order episodes. The Newsletter article, however, is not any less exaggerated itself. (My friends in English Departments tell me that self-reference is some kind of hallmark of real art). And it is not true that the Harvard study was urging moderation. In fact, it is admitted that the original paper “sounded ominous. Every extra daily serving of unprocessed red meat (steak, hamburger, pork, etc.) increased the risk of dying prematurely by 13%. Processed red meat (hot dogs, sausage, bacon, and the like) upped the risk by 20%.” That is what the paper urged. Not moderation. Prohibition. Who wants to buck odds like that? Who wants to die prematurely?

It wasn’t just the media. Critics in the blogosphere were also working overtime deconstructing the study. Among the faults that were cited was the reporting of relative risk, a fault common to much of the medical literature and the popular press.

The limitations of reporting relative risk or odds ratio are widely discussed in popular and technical statistical books and I ran through the analysis in the earlier post. Relative risk destroys information. It obscures what the risks were to begin with. I usually point out that you can double your odds of winning the lottery if you buy two tickets instead of one. So why do people keep reporting it? One reason, of course, is that it makes your work look more significant. But if you don’t report the absolute change in risk, you may be scaring people about risks that aren’t real. The nutritional establishment is not good at facing its critics but on this one, they admit that they don’t wish to contest the issue.
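To put numbers on the lottery example, here is a minimal sketch. The 1-in-1,000,000 odds and the function name `compare_risks` are assumptions chosen only for illustration; they do not come from any real lottery or study.

```python
def compare_risks(p_baseline, p_exposed):
    """Return (relative_risk, absolute_difference) for two probabilities."""
    relative_risk = p_exposed / p_baseline
    absolute_difference = p_exposed - p_baseline
    return relative_risk, absolute_difference


# Hypothetical lottery: one ticket wins with probability 1 in 1,000,000.
one_ticket = 1 / 1_000_000
two_tickets = 2 / 1_000_000

relative, absolute = compare_risks(one_ticket, two_tickets)
print(f"relative risk: {relative:.1f}")
print(f"absolute change in probability: {absolute:.6%}")
```

The relative figure doubles while the absolute chance of winning moves by one in a million, which is the information a relative risk alone leaves out.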

Nolo Contendere.

“To err is human, said the duck as it got off the chicken’s back”

— Curt Jürgens in The Devil’s General

Having turned the media loose to scare the American public, Harvard now admits that the bloggers are correct. The Health NewsBlog allocutes to having reported “relative risks, comparing death rates in the group eating the least meat with those eating the most. The absolute risks… sometimes help tell the story a bit more clearly. These numbers are somewhat less scary.” Why does Dr. Pan not want to tell the story as clearly as possible? Isn’t that what you’re supposed to do in science? Why would you want to make it scary?

The figure from the Health News Blog:

Deaths per 1,000 people per year

| | Lower intake | Deaths | Higher intake | Deaths |
| --- | --- | --- | --- | --- |
| Women | 1 serving unprocessed meat a week | 7.0 | 2 servings unprocessed meat a day | 8.5 |
| Men | 3 servings unprocessed meat a week | 12.3 | 2 servings unprocessed meat a day | 13.0 |

Unfortunately, the Health Blog doesn’t actually calculate the absolute risk for you. You would think that they would want to make up for Dr. Pan scaring you. Let’s calculate the absolute risk. It’s not hard. Risk is usually taken as probability, that is, the number of cases divided by the total number of participants. Looking at the men, the risk of death with 3 servings per week is 12.3 cases per 1,000 people = 12.3/1000 = 0.0123 = 1.23 %. Going to 14 servings a week (the units in the two columns of the table are different) gives 13/1000 = 0.013 = 1.3 %, so, for men, the absolute difference in risk is 1.3 − 1.23 = 0.07 percentage points, less than 0.1 %. Definitely less scary. In fact, not scary at all. Put another way, you would have to drastically change the eating habits (from 14 to 3 servings a week) of 1/0.0007 ≈ 1,429 men to save one life. Well, it’s something. Right? After all, for millions of people, it could add up. Or could it? We have to step back and ask what is predictable about a 1 % risk. Doesn’t it mean that if a couple of guys got hit by cars in one or another of the groups, that might throw the whole thing off? In other words, it means nothing.
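The arithmetic above can be sketched in a few lines of Python. The inputs are the four numbers in the Health Blog table; the function and field names are made up for illustration, and the ratio computed here is only the crude ratio of the two table entries, not the adjusted hazard ratio reported in the paper.

```python
import math


def risk_summary(deaths_low_per_1000, deaths_high_per_1000):
    """Summarize two yearly death rates given as deaths per 1,000 people."""
    risk_low = deaths_low_per_1000 / 1000
    risk_high = deaths_high_per_1000 / 1000
    absolute_difference = risk_high - risk_low
    return {
        "risk_low_pct": risk_low * 100,
        "risk_high_pct": risk_high * 100,
        "absolute_difference_pct_points": absolute_difference * 100,
        "crude_ratio": risk_high / risk_low,
        # People who would have to move from high to low intake to avoid
        # one death per year, rounded up to a whole person.
        "number_needed_to_change": math.ceil(1 / absolute_difference),
    }


men = risk_summary(12.3, 13.0)
women = risk_summary(7.0, 8.5)

for label, summary in (("men", men), ("women", women)):
    print(label, {key: round(value, 3) for key, value in summary.items()})
```

For men this reproduces the figures in the text: a difference of about 0.07 percentage points and roughly 1,429 men who would have to change their diet.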

Observational Studies Test Hypotheses but the Hypotheses Must be Testable.

It is commonly said that observational studies only generate hypotheses and that association does not imply causation. Whatever the philosophical idea behind these statements, it is not exactly what is done in science. There are an infinite number of observations you can make. When you compare two phenomena, you usually have an idea in mind (however much it is unstated). As Einstein put it “your theory determines the measurement you make.” Pan, et al. were testing the hypothesis that red meat increases mortality. If they had done the right analysis, they would have admitted that the test had failed and the hypothesis was not true. The association was very weak and the underlying mechanism was, in fact, not borne out. In some sense, in science, there is only association. God does not whisper in our ear that the electron is charged. We make an association between an electron source and the response of a detector. Association does not necessarily imply causality, however; the association has to be strong and the underlying mechanism that made us make the association in the first place, must make sense.

What is the mechanism that would make you think that red meat increased mortality? One of the most remarkable statements in the original paper:

“Regarding CVD mortality, we previously reported that red meat intake was associated with an increased risk of coronary heart disease (2, 14) and saturated fat and cholesterol from red meat may partially explain this association. The association between red meat and CVD mortality was moderately attenuated after further adjustment for saturated fat and cholesterol, suggesting a mediating role for these nutrients.” (my italics)

This bizarre statement, that saturated fat played a role in increased risk because it reduced risk, was morphed in the Harvard News Letter’s plea bargain to “The authors of the Archives paper suggest that the increased risk from red meat may come from the saturated fat, cholesterol, and iron it delivers;” the blogger forgot to add “…although the data show the opposite.” Reference (2) cited above had the conclusion that “Consumption of processed meats, but not red meats, is associated with higher incidence of CHD and diabetes mellitus.” In essence, the hypothesis is not falsifiable: any association at all will be accepted as proof. The conclusion may be accepted if you do not look at the data.

The Data

In fact, the data are not available. The individual points for each person’s red meat intake are grouped together in quintiles (broken up into five groups) so that it is not clear what the individual variation is and therefore what your real expectation of actually living longer with less meat is. Quintiles are some kind of anachronism, presumably from a period when computers were expensive and it was hard to print out all the data (or, sometimes, a representative sample). If the data were really shown, it would be possible to recognize that they had a shotgun quality, that the results were all over the place and that, whatever the statistical correlation, it is unlikely to be meaningful in any real world sense. But you can’t even see the quintiles, at least not the raw data. The outcome is corrected for all kinds of things: smoking, age, etc. This might actually be a conservative approach (the raw data might show more risk) but only the computer knows for sure.

Confounders

“…mathematically, though, there is no distinction between confounding and explanatory variables.”

— Walter Willett, Nutritional Epidemiology, 2nd edition.

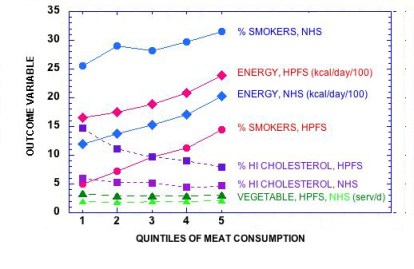

You make a lot of assumptions when you carry out a “multivariate adjustment for major lifestyle and dietary risk factors.” Right off, you assume that the parameter that you want to look at, in this case red meat, is the one that everybody wants to look at, and that other factors can be subtracted out. However, the process of adjustment is symmetrical: a study of the risk of red meat corrected for smoking might alternatively be described as a study of the risk from smoking corrected for the effect of red meat. Given that smoking is an established risk factor, it is unlikely that the odds ratio for meat is even in the same ballpark as what would be found for smoking. The figure shows how risk factors follow the quintiles of meat consumption. If the quintiles had been derived from the factors themselves we would have expected even better association with mortality.

The key assumption is that there are many independent risk factors which contribute in a linear way but, in fact, if they interact, the assumption is not appropriate. You can correct for “current smoker,” but biologically speaking, you cannot correct for the effect of smoking on an increased response to otherwise harmless elements in meat, if there actually were any. And, as pointed out before, red meat on a sandwich may be different from red meat on a bed of cauliflower puree.

This is the essence of it. The underlying philosophy of this type of analysis is “you are what you eat.” The major challenge to this idea is that carbohydrates, in particular, control the response to other nutrients but, in the face of the plea of nolo contendere, it is all moot.

Who paid for this and what should be done.

We paid for it. Pan, et al was funded in part by 6 NIH grants. (No wonder there is no money for studies of carbohydrate restriction). It is hard to believe with all the flaws pointed out here and, in the end, admitted by the Harvard Health Blog and others, that this was subject to any meaningful peer review. A plea of no contest does not imply negligence or intent to do harm but something is wrong. The clear attempt to influence the dietary habits of the population is not justified by an absolute risk reduction of less than one-tenth of one per cent, especially given that others have made the case that some part of the population, particularly the elderly may not get adequate protein. The need for an oversight committee of impartial scientists is the most important conclusion of Pan, et al. I will suggest it to the NIH.

I’m always glad to see another of your posts in my emails. Thanks! One of the nice things about being old is that it is no longer possible to die prematurely. Bring on the bacon wrapped beef.

“I will suggest it to the NIH.” Keep us posted.

Thank you for pointing out the continuing disconnect between the data and the dietary recommendations. I’m beginning to think there’s some sleight of hand going on here. The truth is being shuffled about like the queen of hearts in a game of three card monte and eventually it will be shown to the marks (us) as having been there all along and we simply had not the wit to see it. Here’s another piece of cognitive dissonance from Harvard:

“One highly-publicized report analyzed the findings of 21 studies that followed 350,000 people for up to 23 years. Investigators looked at the relationship between saturated fat intake and coronary heart disease (CHD), stroke, and cardiovascular disease (CVD). Their controversial conclusion: “There is insufficient evidence from prospective epidemiologic studies to conclude that dietary saturated fat is associated with an increased risk of CHD, stroke, or CVD…

…some of the media and blog coverage of these studies would have you believe that scientists had given a green light to eating bacon, butter, and cheese. But that’s an oversimplified and erroneous interpretation…

…Cutting back on saturated fat will likely have no benefit, however, if people replace saturated fat with refined carbohydrates—white bread, white rice, mashed potatoes, sugary drinks, and the like. Eating refined carbs in place of saturated fat does lower “bad” LDL cholesterol—but it also lowers the “good” HDL cholesterol and increases triglycerides. The net effect is as bad for the heart as eating too much saturated fat…”

http://www.hsph.harvard.edu/nutritionsource/what-should-you-eat/fats-full-story/

As bad? Didn’t they just tell me that there was insufficient evidence to conclude that dietary saturated fat was bad for the heart? If it’s not bad, how can something else be “as bad?” They at least get that the refined carbohydrates are bad – now. At least I think that’s what they’re trying to say, although one would be justified in thinking that they mean the opposite since the comparison is to something they admitted earlier was benign!

So maybe they’re planning to go from “saturated fat is bad,” to “saturated fat is bad even though it hasn’t been proven to be bad,” to “saturated fat isn’t bad but you still shouldn’t eat it just in case,” to “saturated fat isn’t bad – we never said it was” – and hoping nobody will notice the switch.

I like to draw an analogy with courts of law. In science, as in the law, you cannot be found innocent, only not guilty. As I’ve suggested before, if you are found not guilty of child molestation, people will still not let you move into their neighborhood. Saturated fat should never have been indicted. Also, in nutrition, there is no double jeopardy or, to use the related concept in civil law and one of my favorite legal phrases, there is no collateral estoppel.

I like it when I have to look something up before noon! “Collateral estoppel” is a good analogy. Also, as Gary Taubes wrote in GCBC (I thought he was quoting Walter Willett but I can’t find the passage), it takes a huge amount of evidence to overturn the conventional wisdom even though it didn’t take much to establish it.

I guess what’s going on in all these blogs is a sort of Innocence Project for saturated fat!

Awesome, as always. Love your takedowns.

What about neu5gc? Maybe it’s why red meat consistently shows up in nutrition studies as bad for us. Not saturated fat, not cholesterol (which I agree are only bad for you if you’re also consuming lots of carbs). I wish someone in the low-carb community would take a serious, open-minded look at neu5gc. It might just be the elephant in the low-carb living room.

I don’t know much about neu5gc but I think right now it has a long way to go before reaching elephant status.

“Maybe it’s why red meat consistently shows up in nutrition studies as bad for us.”

It may be commonly asserted to be “bad for us” but that is not the same as consistently showing up as bad for us 😉

Just so.

You know what they say about terrorism: Follow the money. Clearly there are some mega-dollars behind the scare tactics, even if the money is funneled through a govt agency, and Harvard (my alma mater, I will add) is not immune to the dollar-disease.

What? Harvard, dollar-disease? Do you think?

I wonder if there is an independent meat producers association who would be willing to put up money for research into this issue? If there were such a body I could see they would have much to gain… although I suspect that nowadays we are looking at big multinationals, that have a finger in every kind of food pie ;-(

I offer this quote from Vilhjalmur Stefansson’s Adventurers in Diet…

“In 1920 … One of the Mayo brothers suggested that I spend two or three weeks there to have a check-over and see whether they could not find evidences of the supposed bad effects of meat. I wanted to do this but commitments in New York prevented.

Then one day while talking with the gastro-enterologist Dr. Clarence W. Lieb, I told him of my regret that I had not been able to take advantage of the Mayo check-over. Lieb said there were good doctors in New York, too, and volunteered to gather a committee of specialists who would put me through an examination as rigid as anything I could get from the Mayos.

The committee was organized, I went through the mill, and Dr. Lieb reported the findings in the Journal of the American Medical Association for July 3, 1926, “The Effects of an Exclusive Long-Continued Meat Diet.” The committee had failed to discover any trace of even one of the supposed harmful effects.

With this publication the Lieb and Pearl events merge. For when the Institute of American Meat Packers wrote asking permission to reprint a large number of copies for distribution to the medical profession and to dietitians, Lieb, Pearl and I went into a huddle. The result was a letter to the Institute saying that we refused permission to reprint, but suggesting that they might get something much better worth publishing, and with right to publish it, if they gave a fund to a research institution for a series of experiments designed to check, under conditions of average city life, the problems which had arisen out of my experiences and views. For it was contended by many that an all-meat diet might work in a cold climate though not in a warm, and under the strenuous conditions of the frontier though not in common American (sedentary) business life.

We gave the meat packers warning that, if anything, the institution chosen would lean backward to make sure that nothing in the results could even be suspected of having been influenced by the source of the money. …”

The National Cattlemen’s Beef Association is such an organization but they are scientifically backward. They are afraid of saturated fat. They would not fund my grant to help African-Americans with type 2 diabetes because they are afraid of the words “low-carb” and are afraid of fat. More commonly used than the original of which it is a parody is Pogo’s comment: we have met the enemy and he is us.

I am daily astonished by people who work against–or do nothing to advance– their own best interests; apparently organizations are prone to this, too. Fear is powerful.

I am not sure what this refers to but I agree with the observation.

“Good” HDL cholesterol may not protect heart after all, study suggests:

http://www.cbsnews.com/8301-504763_162-57436495-10391704/good-hdl-cholesterol-may-not-protect-heart-after-all-study-suggests/

Doubt Cast on the ‘Good’ in ‘Good Cholesterol’:

http://www.nytimes.com/2012/05/17/health/research/hdl-good-cholesterol-found-not-to-cut-heart-risk.html

I was referencing your comment on the Cattlemen’s Association. Meaning one would think they would be getting behind Taubes, et al.

Never mind Taubes. One would think that they would fund our attempt to help African-Americans with type 2 diabetes, especially since the beef hot dog is the national food of Brooklyn. I think they are seeing the light and I think that they are funding Jeff Volek’s research.

[…] Crimson Slime – Making Americans Afraid of Meat. – Dr. Feinman is a little late to the party on this one, but it’s a good read anyhow. […]

Given the consistency of the findings in observational studies around the world, one would like to see hard end point RCTs done. I’m intrigued by the phenomenon and, despite your well-grounded criticisms, think there is something more to this.

Think of the effect of red meat on kidney function. Red meat is shown to associate with a decline in kidney function in observational studies 1.usa.gov/KC1vNX . Not only observational studies, but also randomized short-term trials in diabetes have shown that red meat worsens albuminuria 1.usa.gov/K0Sdxf & 1.usa.gov/KHJfmG . Actually, by leaving red meat out of their diet, type 2 diabetics can reap benefits similar to standard drug treatment in kidney disease (i.e., ACE inhibitor) 1.usa.gov/KszBFE . This makes me believe there might be more than just confounders to the story.

But who knows. At least, I’m very interested in any randomized data on red and processed meat that would show the opposite, i.e., protective effects, in cardio-renal diseases. Until such data emerge, perhaps we should keep our eyes open for neu5gc (thanks Donna), nitrites, heme iron, BPAs in plastic or anything.

Hopefully this would be a long term trial, more than 2 years

These links don’t work. It all sounds like going out of our way to find something. If a two year study doesn’t work, we will use NIH money for a 5 year study and so on out to the crack of doom. Since you get benefits from carbohydrate restriction in two weeks, maybe it would be better to pursue that angle.

I hope the links work if you write them like this: http://1.usa.gov/KC1vNX, http://1.usa.gov/K0Sdxf & http://1.usa.gov/KHJfmG The topic was red meat, I find the links rather relevant in respect to cardiorenal syndrome.

So, I’m wondering — if red meat is unhealthful and white meat is less so, what is it about red meat that’s different from white meat? What are the specific nutrients in red meat that are supposed to be harmful? All I think of when I think of red meat is good nutrition in a small package.

Judging from the following web site, the matter is not black and white (sorry). Whether meat is red or white simply depends on how the muscles were used:

http://www.exploratorium.edu/cooking/meat/INT-what-meat-color.html

I think the high concentration of mitochondria also contributes to the color (the cytochromes have same general structure as myoglobin). As for what it is about red meat, I think the post has a pretty good explanation:

What is the mechanism that would make you think that red meat increased mortality. One of the most remarkable statements in the paper:

“Regarding CVD mortality, we previously reported that red meat intake was associated with an increased risk of coronary heart disease(2, 14)and saturated fat and cholesterol from red meat may partially explain this association. The association between red meat and CVD mortality was moderately attenuated after further adjustment for saturated fat and cholesterol, suggesting a mediating role for these nutrients.” (my italics)

This bizarre statement that saturated fat played a role in increased risk because it reduced risk was morphed in the Harvard News Letters plea bargain to “The authors of the Archives paper suggest that the increased risk from red meat may come from the saturated fat, cholesterol, and iron it delivers” although the blogger forgot to add “…although the data show the opposite.” Reference (2) cited above had the conclusion that “Consumption of processed meats, but not red meats, is associated with higher incidence of CHD and diabetes mellitus.” In essence, the hypothesis is not falsifiable — any association at all will be accepted as proof.

This, as I pointed out before, is upside-down science. It used to be that science led to recommendations. What we have here is recommendation-driven science. A recommendation is made and then the science sets out to justify it. It is very sad.

“It is very sad.”

It is very sad, indeed. Even sadder is all the publicity this thing got. After taking another look at the Washington Post article, and doing a little poking around about Rashmi Sinha, I have the impression the article is little more than a thinly-veiled vegetarian/vegan/animal rights propaganda piece. The last two paragraphs have little to do with health:

http://www.washingtonpost.com/wp-dyn/content/article/2009/03/23/AR2009032301626.html

“In addition to the health benefits, a major reduction in the eating of red meat would probably have a host of other benefits to society, Popkin said: reducing water shortages and pollution, cutting energy consumption, and tamping down greenhouse gas emissions — all of which are associated with large-scale livestock production.

“There’s a big interplay between the global increase in animal food intake and the effects on climate change,” he said. “If we cut by a few ounces a day our red-meat intake, we would have big impact on emissions and environmental degradation.”

Rashmi Sinha’s lecture on “Meat and Cancer” is promoted on Harvard vegetarian/vegan sites, and while that doesn’t prove anything, an article she coauthored on “Cancer Risk and Diet in India” states that “vegetarian diets. . . have been significantly associated with reductions in cancer.”


It appears that not only was the study flimsy to begin with, since it depended on self reporting of thousands of people over a decade, it started out with a definite bias.

I’m not an expert in this area, but I doubt that cancer and its association (strong or otherwise) with red meat is anywhere near as big a health problem in India as diabetes, which is not being treated in the ways that we might recommend.

Ronald Krauss on red meat and saturated fat http://bit.ly/M03LQR (podcast and transcript)

Ronald Krauss has found some evidence against high amounts of red meat (during a low carb diet). Interestingly, it is just one small clinical study he refers to. Mangravite et al. 2011

Two things about Ron Krauss: first, he talks about lowering carbohydrate but never cites other researchers and the numerous papers supporting low carb strategies. Second, his idea of low carb is 26 % carbohydrate because that is what the AHA says is the minimum.

I have not read the study but the dialogue shows that the scientific facts are pretty much irrelevant…..

RON KRAUSS

Apo-B is … a whole lot more accurate at predicting risk for heart disease, than just measuring the total amount of cholesterol itself. This is being acknowledged more widely in the first of heart disease assessment.

Has anybody told the American Heart Association this?

RON KRAUSS

The AHA, the American Heart Association, and I’ve served on their advisory board, the AHA does two things: it promotes scientific research and it makes statements from time to time.

But their policy statements don’t reflect the importance of counting LDL particles yet.

RON KRAUSS

No. There’s still a lot of debate about whether we should be advancing beyond the old LDL “total” cholesterol risk assessment, to understanding it based on particle concentrations, but I think we’re moving in the right direction.

Translation: Scientific truth is not as important as serving on their advisory board…so never mind about your health. I and the AHA will tell you when you can follow the science. Moving in the right direction? Moving towards the truth? “promotes scientific research?”

This is pretty grim stuff.

apoB is finally being recognised in the 2009 Canadian Lipid Guidelines


“LDL-C therefore continues to constitute the primary target of therapy; the alternate primary target is apoB.” with a target set for apoB of “< 0.80 g/L”

BUT my specialist (an Endocrinologist) here in Nova Scotia, has told me that she is not even authorised to order the test yet!

I think a good approximation is TAG/HDL, the cut-off point for which is 3.5. Anything below is considered good.

Thanks… my latest Triglycerides/HDL-C ratio was 1.0 (Trigs 77.06 and HDL-C 74.14) so despite dire warnings from my Doctor, I’m still ignoring the “high” LDL-C 😉

What if you read the study?

Well, I found that a 3-week study with 31 % carbohydrate shows small changes with large variation. I am not sure what I am supposed to make of this. What do you think about the study?

I also think it’s small and short, but you may want to pay attention to the fact that the findings are in line with cohort studies. The results indicate, or at least make you speculate, that for one reason or another red meat might be the modifying factor determining whether saturated fats (from dairy) are atherogenic or not. The study also shows that it’s healthy to use vegetable oils in place of dairy fat while on a meat-based low carb diet. As the authors conclude, “…reductions in the other lipoprotein-related risk factors, including apo B and small LDL, were greatest following consumption of a Low Carbohydrate Low Saturated Fat Diet.” If I remember right, Krauss and co. will run another clinical trial to see if they can confirm and expand the findings. But of course, it’s everyone’s own choice whether to dismiss or scrutinize the results of the study. Real scientists will always love to learn something new.

One reason why red meat might be atherogenic is the AGE products that are formed when meat is prepared. Just a guess.

Thanks. It’s always good to have a definition of a real scientist and I certainly hope to learn something new from Krauss’s next clinical trial.

This is the right blog for anyone who wants to find out about this topic. You understand so much its almost exhausting to argue with you (not that I really would want…HaHa). You positively put a brand new spin on a subject thats been written about for years. Nice stuff, simply great!

Flattery will get you everywhere.

I am reading your book and enjoying it. A bit complex for a non-science person, but I am getting the gist. I appreciate your efforts to help us all understand the sorry state of nutritional “science” better. A real eye-opener.

Thanks. I am glad you like it.