“Doctors prefer large studies that are bad to small studies that are good.”

— anon.

The paper by Foster and coworkers entitled Weight and Metabolic Outcomes After 2 Years on a Low-Carbohydrate Versus Low-Fat Diet, published in 2010, had a surprisingly limited impact, especially given the effect of their first paper in 2003 on a one-year study. I have described the first low-carbohydrate revolution as taking place around that time and, if Gary Taubes’s article in the New York Times Magazine was the analog of Thomas Paine’s Common Sense, Foster’s 2003 paper was the shot heard ’round the world.

The paper showed that the widely accepted idea that the Atkins diet, admittedly good for weight loss, was a risk for cardiovascular disease, was not true. The 2003 Abstract said “The low-carbohydrate diet was associated with a greater improvement in some risk factors for coronary heart disease.” The publication generated an explosion in the popularity of the Atkins diet, ironic in that Foster had said publicly that he undertook the study in order to “once and for all” get rid of the Atkins diet. The 2010 paper, by extending the study to 2 years, would seem to be very newsworthy. So what was wrong? Why is the new paper more or less forgotten? Two things. First, the paper was highly biased and its methods were so obviously flawed, obvious even to the popular press, that it may have been a bit much even for the media. It remains to be seen whether it will really be cited but I will suggest here that it is a classic in misleading research and in the foolishness of intention-to-treat (ITT).

Second, the zeitgeist had changed from 8 years before. (Not for the better.) Science no longer entered into it. The USDA, the NIH and the private health agencies now simply write anything they want and nobody objects. Low-fat is still the name of the game and plays a big part in the USDA guidelines and hence in the school lunch program of the Healthy Hunger-Free Kids Act endorsed by Michelle Obama and a progression of suits with maudlin expressions of optimism. There are numerous disclaimers about good fats and bad fats, so that you can’t really hold them to anything, but the Guidelines for Americans made it clear that there is a State Diet and you can talk about other stuff all you want as long as you don’t expect to be funded by any government agency and don’t anticipate any recognition. Anyway, the Foster study.

There are a lot of odd twists and turns in this study, but getting right to the results, Figure 2 is labeled “Predicted absolute mean change in body weight for participants ….” Predicted? That sounds strange. What about the data? The figure shows no difference in change in body weight between the low-carb diet and the low-fat diet. Well, it happens. Usually, the low-carb diet does better but there is no guarantee of that. Figure 3, however, shown below, indicates changes in triglycerides for the 3, 6, 12 and 24 month time periods. Now, reduction in triglycerides is virtually the hallmark of low-carbohydrate diets, and the big difference in reductions on the two diets seen after 3 or 6 months (shown by the arrow) is the usual result when comparing low-carbohydrate and low-fat diets but, in this case, they actually come together after 24 months. How is that possible?

This seemed strange so I realized I had to find out where “predicted” came from and that meant reading the Methods section and particularly the Statistical Analysis section on how the data had been handled. You rarely read these sections unless you think there is a problem. Large studies like this usually have a statistician and they use standard methods whose details may or may not be understood by a non-statistician (or the authors for that matter). As I kept reading the statistical section, I found it increasingly tedious and hard to read until I hit this passage (I’ve highlighted the key words):

“The previously mentioned longitudinal models preclude the use of less robust approaches, such as fixed imputation methods (for example, last observation carried forward or the analysis of participants with complete data [that is, complete case analyses]). These alternative approaches assume that missing data are unrelated to previously observed outcomes or baseline covariates, including treatment (that is, missing completely at random).”

What’s going on here? In a nutshell, they used “data” from people who dropped out of the experiment. To do this, all they had to do was “assume that all participants who withdraw would follow first the maximum and then minimum patient trajectory of weight.” Whatever this means, if anything, the key words are “withdraw” and “assume.” In other words, this is a step beyond intention-to-treat where you would include, for example, the weight of people who showed up to be weighed but had not actually followed one or another diet. Here there is no data. A pattern of behavior is assumed and data is, let’s face it, made up. Insofar as this is appropriate the results could, in theory, be fit to a model for a three-year study, or a ten-year study, or whatever, since the experiments didn’t actually have to be performed. These experiments are expensive; think of the money that could be saved if we could work only with “predicted” data. Which makes one wonder: who funded this kind of research?

So this is an extreme kind of intention-to-treat which limits the kind of conclusions you can draw.

It is odd that ITT is controversial, since it is so blatantly foolish, but a reasonable way to deal with potential disagreement is simply to publish both the ITT data and the data that includes only the compliers, the so-called “per protocol” group. This is what was done in the Vitamin E study described in the last post on this subject. This data is missing from Foster’s paper. So, where is the data? One thing that caught my eye was the statement at the end of the article: “Data set: Available from Dr. Foster … subject to study group approval and National Institutes of Health policy.” When I tried to get the dataset, Dr. Foster assured me that they were still planning future publications. We’ll see.

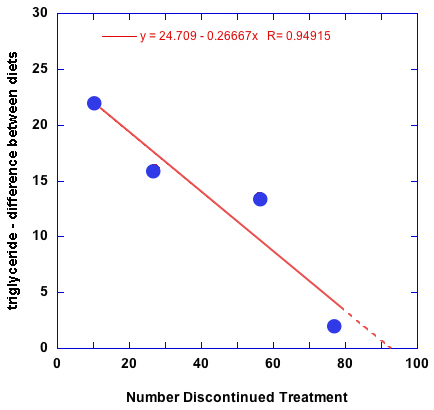

So, was the decline in performance due to including the made-up data from the dropouts? One way to get an idea of whether that is true is to plot, for each time point, the number of people who discontinued treatment against triglycerides at that time point.

Since we are suspicious of the idea that triglyceride lowering was the same on both low-fat and low-carbohydrate arms, we plot the difference between the two values (the double-headed arrow in Figure 3, above). The results, shown above, indicate a direct correlation: the more people who dropped out, the more similar the two measurements were. In other words, the fact that, as the authors put it, “Decreases in triglyceride levels were greater in the low-carbohydrate than in the low-fat group at 3 and 6 months but not at 12 or 24 months” was almost surely due to the differences being diluted by people who weren’t on the low-carbohydrate diet; and ITT, or whatever this was, always makes the better diet look worse than it is.
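The dilution argument can be made concrete with a toy calculation. This is only a sketch under loudly stated assumptions: the same fraction of each arm drops out, and every dropout is assigned the same imputed change regardless of arm, so dropouts contribute no difference between the diets. The function name `diluted_difference` and all the numbers are hypothetical illustrations, not values from Foster’s paper and not the authors’ actual model.

```python
def diluted_difference(true_diff, dropout_fraction, imputed_diff=0.0):
    """Between-arm difference reported when dropouts are mixed in with completers.

    true_diff        -- difference among people who stayed on their diets
    dropout_fraction -- share of each arm whose values were imputed (0 to 1)
    imputed_diff     -- difference assigned to dropouts (ASSUMED to be 0 here)
    """
    if not 0.0 <= dropout_fraction <= 1.0:
        raise ValueError("dropout_fraction must be between 0 and 1")
    # Weighted average of the completers' difference and the imputed difference.
    return (1.0 - dropout_fraction) * true_diff + dropout_fraction * imputed_diff


# Hypothetical numbers only: a 30-unit advantage among compliers.
for fraction in (0.0, 0.25, 0.5):
    print(fraction, diluted_difference(30.0, fraction))
```

In this toy model the reported difference shrinks in direct proportion to the dropout fraction, which is the kind of relation the plot above suggests. Whether the real data follow it can only be checked with the compliers’ numbers.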

It gets worse. The Discussion:

“Our study has 2 main findings. First, neither dietary fat nor carbohydrate intake influenced weight loss when combined with a comprehensive lifestyle intervention. Second, because both diet groups achieved nearly identical weight loss, we were able to determine that a low carbohydrate diet has greater beneficial long-term effects on HDL cholesterol concentrations than a low-fat diet.” (my emphasis)

The first sentence gets to the heart of the matter. It is, after all, what we want to know. Is one macronutrient better or worse than another? So what were the dietary intakes? Well, it’s not in the paper. The paper is quite long, with a tedious Appendix on the lifestyle intervention, but I read it carefully. I really did. The data weren’t there. I was going to write to the authors when I found out, I think through somebody’s blog, maybe Tom Naughton’s, that the article had been covered in a story in the Los Angeles Times and, as reported by Bob Kaplan:

“Of the 307 participants enrolled in the study, not one had their food intake recorded or analyzed by investigators. The authors did not monitor, chronicle or report any of the subjects’ diets. No meals were administered by the authors; no meals were eaten in front of investigators. There were no self-reports, no questionnaires.

The lead authors, Gary Foster and James Hill, explained in separate e-mails that self-reported data are unreliable and therefore they didn’t collect or analyze any.”

I confess to feeling a bit betrayed. I don’t like getting scientific information from the LA Times. (I know. How long have I been in this business?) How can you say “neither dietary fat nor carbohydrate intake influenced weight loss” if you haven’t measured fat or carbohydrate? I guess if you can “impute” data, you can make up the conclusion, but it seems blatantly dishonest. No? Well, we don’t want to accuse anybody in light of the second factor that I mentioned at the beginning of this post: the state of mind of the nutritional world. The science simply doesn’t count and there is an accepted low-fat dogma. From this perspective, what would happen if the authors reported the facts as they found them or, more important, if they measured the relevant data, if they asked what people eat, as Kaplan put it, “the single most important question … that any reasonably intelligent high school student would ask?” In short, if they tried to give real information about low-carbohydrate diets, is there any chance they would be funded again? In other words, we don’t know what the authors were thinking but, given the pervasive state of nutritional policy, is it not asking a lot to fight City Hall?

City Hall, having failed to show any risk of low-carbohydrate diets, indeed having witnessed studies that show only benefit, and having spent hundreds of millions on studies that showed no benefit of low-fat diets, has fallen back on the strategy of saying that, well, none of it counts. All macronutrients are irrelevant, only calories count. So who funded this kind of research?

“Washington University (grant UL1 RR024992); Temple University (grant R01 AT1103); University of Pennsylvania (grant UL1RR024134); University of Colorado (grant UL1 RR000051); and the National Center for Research Resources, a component of the National Institutes of Health (DK 56341), to Washington University Clinical Nutrition Research Unit,”

that is, the NIH, and while I am sure that it is true that “The funding source had no role in the design, conduct, or reporting of the study,” something is wrong here.

[…] al: Weight and Metabolic Outcomes After 2 Years. August 28, 2011. By: rdfeinman. Read the Full Post at: Richard David Feinman. “Doctors prefer large studies that are bad to small studies that are good.” – […]

Dr. Feinman,

Very interesting, and compelling work!

So, if I got this right, in this Foster study, if a low-fat dieter dropped out of the trial, his/her weight and other data would be assumed to be the same as the mean weight and other data, month by month, for all patients (both low-fat and low-carb) for the remainder of the study? If this is true it would, as you indicate, degrade the performance of the best diet and improve the performance of the worst diet.

I’d like to spread the word on your work here but want to get it right first. Thanks!

…Joe…

If a patient dropped out, their last data point is extended to the next point that would have been measured, according to a rate of change that depends on their prior behavior or the behavior of the group. The exact method is not clear; there is a certain amount of statistical double-talk here. In fact, the first paper in 2003 used a similar method of carrying the last data point forward but there, they also showed the data for just the people who stayed in the study. A similar procedure was followed in a paper by Stern, et al. (Ann Intern Med 2004, 140(10):778-785) who also presented data for just compliers. The comparison is shown in my ITT paper: http://www.nutritionandmetabolism.com/content/pdf/1743-7075-6-1.pdf. The statement that you always hear, that the diets are the same at one year, may depend on which method of analysis is used. The fact that other people use this method does not make it any more reasonable.
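For readers who have not seen it, plain last observation carried forward, together with the compliers-only alternative, can be sketched in a few lines. This is a minimal illustration, not the procedure used in either Foster paper (whose exact method, as noted, is not clear); the function names and the weights are hypothetical.

```python
def locf(measurements):
    """Fill missed visits (None) with the most recent observed value."""
    filled = []
    last_seen = None
    for value in measurements:
        if value is not None:
            last_seen = value
        # Visits before any observation stay None; later gaps reuse last_seen.
        filled.append(last_seen)
    return filled


def complete_cases(participants):
    """Keep only participants who have a value at every visit."""
    return [p for p in participants if all(v is not None for v in p)]


# Hypothetical weights (kg) at four visits; None marks visits after dropout.
dropout = [100.0, 95.0, None, None]
completer = [100.0, 94.0, 92.0, 91.0]

print(locf(dropout))
print(complete_cases([dropout, completer]))
```

The first call treats the dropout as if their weight froze at the last visit attended; the second simply leaves them out. Reporting both is what lets a reader see how much of a result depends on the filled-in values.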

Governments are in a box. They need a grain-based, high-carb diet to work because what else is there to feed the masses but grain? It doesn’t work for a lot of people, who get fatter and fatter and/or develop diseases such as diabetes and hypertension. Individuals can try something else, at least if they have the money, but from the governmental/global viewpoint, what’s for dinner is grain and more grain. I expect leaders to keep trying to pound that square peg into that round hole. They need the peg to fit, so they will just pound harder and harder.

It’s hard to know who “they” are. The part of the government that I am concerned with is the NIH and related agencies that will only fund studies that uphold the round hole.

I have a different take on the triglyceride issue. I don’t see it as much a function of made-up data, but more an indication that the “atkins” group wasn’t on the plan anymore. Of course, without any real data (either the actual food or the actual POUNDS LOST, as the paper promises), it’s hard to tell.

Also, it is my belief that the lack of interest in this paper is due to the lack of interest in low carb in general. “Atkins is dead now, and we don’t need to worry about all that anymore.” The take-home is that the diet plans are the same, and there is no mention at all of those pesky lipid values. I think the lack of interest may also point more to the strength of the low-fat message than to the validity of the study. Goodness! The quality of a study hasn’t seemed to affect whether it has been cited in other instances (of course, I have no “r” to back up my statement).

Here’s my take on the paper: http://exceptionallybrash.blogspot.com/2011/03/fosters-pounds-lost-study.html

I agree. That the diets were becoming the same with time is certainly a factor, as it always is in these studies. Of course, you can’t find this out until you actually look at the compliers. I think the “Atkins is dead” is also part of the story but the more insidious part of the story is that the lipophobes are now trying to back into low-carb and, naturally, will not cite any work not done by their friends. My own perception is that the field is now in total chaos and you can say whatever you want. Peer review is virtually meaningless and that is why I think some kind of government intervention is needed. It is surely possible to find scientists who are not tied to nutritional policy who could evaluate current literature and give the population some real information. Better use of government power than giving kids ration cards for sugar. I did like your take on this and I was probably influenced by other bloggers who also did a good job on this. What is amusing is that the authors did not record food intake because it is too unreliable but they consider the made-up numbers as hard data.

You nail it in that last sentence: “What is amusing is that the authors did not record food intake because it is too unreliable but they consider the made-up numbers as hard data.”

I don’t know if many of you know the Spanish PREDIMED-Reus trial. It is a very interesting study on primary prevention of type 2 diabetes. The results are great for the Mediterranean diet and bad for the “low fat” diet. But again, the data on dietary intakes are very incomplete. What’s going on? (At least they have some data on compliance.)

http://care.diabetesjournals.org/content/early/2010/10/12/dc10-1288