“In the Viking era, they were already using skis…and over the centuries, the Norwegians have proved themselves good at little else.”

–John Cleese, Norway, Home of Giants.

With a 3-foot bookshelf of popular attacks on the low-fat diet-heart idea, it is pretty remarkable that there is only one defense: Daniel Steinberg’s The Cholesterol Wars: The Skeptics vs. the Preponderance of Evidence [1]. It is probably more accurately called a witness for the prosecution since low-fat, in some way or other, is still the law of the land.

The book is very informative, if biased, and it provides an historical perspective describing the difficulty of establishing the cholesterol hypothesis. Oddly, though, it still appears to be very defensive for a witness for the prosecution. In any case, Steinberg introduces into evidence the Oslo Diet-Heart Study [2] with a serious complaint:

“Here was a carefully conducted study reported in 1966 with a statistically significant reduction in reinfarction [recurrence of heart attack] rate. Why did it not receive the attention it deserved?”

“The key element,” he says, “was a sharp reduction in saturated fat and cholesterol intake and an increase in polyunsaturated fat intake. In fact, each experimental subject had to consume a pint of soybean oil every week, adding it to salad dressing or using it in cooking or, if necessary, just gulping it down!”

Whatever it deserved, the Oslo Diet-Heart Study did receive a good deal of attention. The Women’s Health Initiative (WHI) liked it. The WHI was the most expensive failure to date. It found that “over a mean of 8.1 years, a dietary intervention that reduced total fat intake and increased intakes of vegetables, fruits, and grains did not significantly reduce the risk of CHD, stroke, or CVD in postmenopausal women.” [3]

The WHI adopted a “win a few, lose a few” attitude, comparing its results to the literature, where some studies showed an effect of reducing dietary fat and some did not. This made me wonder: if the case is so clear, why are there any failures? Anyway, it cited the Oslo Diet-Heart Study as one of the winners and attributed the outcome to the substantial lowering of plasma cholesterol.

So, “cross-examination” would tell us why, if there was “a statistically significant reduction in reinfarction rate,” it did “not receive the attention it deserved.”

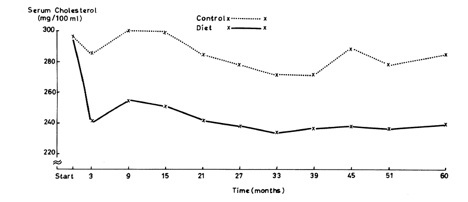

First, the effect of diet on cholesterol over five years:

The results look good although, since all the numbers are considered fairly high, and since the range of values is not shown, it is hard to tell just how impressive the results really are. But we will stipulate that you can lower cholesterol on a low-fat diet. But what about the payoff? What about the outcomes?

The results are shown in Table 5 of the original paper. Steinberg described how, in the first 5 years, “58 patients of the 206 in the control group (28%) had a second heart attack” (first 3 lines under the first line of blue highlighting) but only “… 32 of the 206 in the diet (16%)…” which does sound pretty good.

In the end, though, what really matters is the total deaths from cardiac disease. The second blue-highlighted line in Table 5 shows the two final outcomes. How should we compare these?

1. The relative risk is just the ratio of the two outcomes (since there are the same number of subjects) = CHD mortality (control) / CHD mortality (diet) = 94/79 = 1.19. This seems strikingly close to 1.0, that is, a flip of a coin. These days the media, or the report itself, would report that CHD mortality was 19 % higher in the control group (equivalently, 79/94 = 0.84, a 16 % reduction on the diet).

2. If you look at the absolute values, however, the mortality in the controls is 94/206 = 45.6 %, but the diet group had reduced this to 79/206 = 38.3 %, so the change in absolute risk is 45.6 % – 38.3 %, or only 7.3 %, which is less impressive but still not too bad.

3. So for every 206 people, we save 94-79 = 15 lives, or, dividing, 206/15 = 14 people needed to treat to save one life (usually abbreviated NNT). That doesn’t sound too bad. Not penicillin but could be beneficial. I think…
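The three comparisons above can be checked in a few lines. This is only a sketch of the arithmetic: the function name `compare_groups` is hypothetical, and the only inputs are the Table 5 counts quoted in the text (94 and 79 CHD deaths, 206 men per group).

```python
def compare_groups(deaths_control, deaths_diet, n_per_group):
    """Relative risk, absolute risk difference, and NNT for two equal-sized groups."""
    risk_control = deaths_control / n_per_group
    risk_diet = deaths_diet / n_per_group
    # Ratio of the two risks (control over diet, as in the 94/79 calculation).
    relative_risk = risk_control / risk_diet
    # Difference between the two risks, as a fraction of each group.
    absolute_difference = risk_control - risk_diet
    # Number needed to treat is the reciprocal of the absolute difference.
    number_needed_to_treat = 1 / absolute_difference
    return relative_risk, absolute_difference, number_needed_to_treat


# Counts quoted in the text from Table 5 of the Oslo report.
relative_risk, absolute_difference, nnt = compare_groups(94, 79, 206)

print(f"relative risk (control/diet): {relative_risk:.2f}")
print(f"absolute risk difference:     {absolute_difference:.1%}")
print(f"number needed to treat:       {nnt:.1f}")
```

The same two counts give a ratio that sounds like a headline and an absolute difference that sounds modest, which is the point of looking at all three.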

Smoke and mirrors.

It’s what comes next that is so distressing. Table 10 pools the two groups, the diet and the control group, and now compares the effect of smoking: in the whole population, the ratio of CHD deaths in smokers vs non-smokers is 119/54 = 2.2 (magenta highlight), which is somewhat more impressive than the 1.19 effect we just saw. Now,

1. The absolute difference in risk is (119-54)/206 = 31.6 % which sounds like a meaningful number.

2. The number needed to treat is 206/65 = 3.17, or only about 3 people need to quit smoking to see one less death.
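The smoking figures can be run through the same arithmetic. A sketch only: it reuses the denominator of 206 from the calculation above, which is an assumption carried over from the text, since the actual numbers of smokers and non-smokers in Table 10 are not reproduced here.

```python
# CHD deaths from Table 10 as quoted in the text (both study groups pooled).
deaths_smokers = 119
deaths_nonsmokers = 54

# ASSUMPTION: same denominator as the text's calculation, not the true
# smoker / non-smoker head counts, which are not given in this post.
N_ASSUMED = 206

death_ratio = deaths_smokers / deaths_nonsmokers
excess_deaths = deaths_smokers - deaths_nonsmokers
absolute_difference = excess_deaths / N_ASSUMED
nnt = N_ASSUMED / excess_deaths

print(f"ratio of CHD deaths (smokers/non-smokers): {death_ratio:.1f}")
print(f"excess deaths among smokers:               {excess_deaths}")
print(f"absolute difference:                       {absolute_difference:.1%}")
print(f"number needed to treat:                    {nnt:.2f}")
```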

In fact, in some sense, the Oslo Diet-Heart Study provides smoking-CHD risk as an example of a meaningful association that one can take seriously. If only such a significant change had actually been found for the diet effect.

So what do the authors make of this? Their conclusion is that “When combining data from both groups, a three-fold greater CHD mortality rate is demonstrable among the hypercholesterolemic, hypertensive smokers than among those in whom these factors were low or absent.” Clever but sneaky. The “hypercholesterolemic, hypertensive” part is irrelevant since you combined the groups. In other words, what started out as a diet study has become a “lifestyle study.” They might as well have said “When combining data from fish and birds, a significant number of wings were evident.” Members of the jury are shaking their heads.

Logistic regression. What is it? Can it help?

So they have mixed up smoking and diet. Isn’t there a way to tell which was more important? Well, of course, there are several ways. By coincidence, while I was writing this post, April Smith posted the following challenge on Facebook: “The first person to explain logistic regression to me wins admission to SUNY Downstate Medical School!” I won, although I am already at Downstate. Logistic regression is, in fact, a statistical method that asks what the relative contribution of different inputs would have to be to fit the outcome. This could have been done but, in this case, I would use my favorite statistical method, the Eyeball Test. Looking at the data in Tables 5 and 10 for CHD deaths, you can see immediately what’s going on. Smoking is a bigger risk than diet.

If you really want a number, we calculated relative risk above. Again, we found for mortality, CHD (control) / CHD (diet) = 94/79 = 1.19. But what happens if you took up smoking? Table 10 shows that your chance of dying of heart disease would be increased by 119/54 = 2.2, or more than twice the risk. Bottom line: if you decided to add saturated fat to your diet, your risk would be 1.19 times what it was before, which might be a chance you could take when faced with authentic foie gras.

Daniel Steinberg’s question:

“Here was a carefully conducted study reported in 1966 with a statistically significant reduction in reinfarction rate. Why did it not receive the attention it deserved?”

Well, it did. This is not the first critique. Uffe Ravnskov described how the confusion of smoking and diet led to a new Oslo Trial in which reductions in both were specifically recommended and, again, outcomes made diet look bad [4]. Ravnskov gave it the attention it deserved. But what about researchers writing in the scientific literature? Why do they not give the study the attention it deserves? Why do they not point out its status as a classic case of a saturated fat risk study with no null hypothesis? It certainly deserves attention for its devious style. Of course, putting that in print would guarantee that your grant is never funded and your papers will be hard to publish. So, why do researchers not give the Oslo Diet-Heart Study the attention it deserves? Good question, Dan.

Bibliography

1. Steinberg D: The cholesterol wars : the skeptics vs. the preponderance of evidence, 1st edn. San Diego, Calif.: Academic Press; 2007.

2. Leren P: The Oslo diet-heart study. Eleven-year report. Circulation 1970, 42(5):935-942.

3. Howard BV, Van Horn L, Hsia J, Manson JE, Stefanick ML, Wassertheil-Smoller S, Kuller LH, LaCroix AZ, Langer RD, Lasser NL et al: Low-fat dietary pattern and risk of cardiovascular disease: the Women’s Health Initiative Randomized Controlled Dietary Modification Trial. JAMA 2006, 295(6):655-666.

4. Ravnskov U: The Cholesterol Myths: Exposing the Fallacy that Cholesterol and Saturated Fat Cause Heart Disease. Washington, DC: NewTrends Publishing, Inc.; 2000.

[…] Admissibility. IV. The Oslo Diet-Heart Study. August 29, 2011. By: rdfeinman. Read the full post at: Richard David Feinman. “In the Viking era, they were already using skis…and over the centuries, the Norwegians […]

I have to say that I didn’t quite catch what was your message on smoking in the context of Oslo Diet-Heart. Was it a confounder, or intentionally introduced into the design after initiation of the study? I also gave some attention to Oslo Diet Heart (and Finnish Mental Hospital Study). See these slides if interested: http://www.slideshare.net/pronutritionist/oslo-dietheart-study

My message was that the effect of diet was minimal while the effect of smoking was real. By combining them, they give the false impression that there was an effect of diet. It may be, as you say, “intentionally introduced into the design after initiation of the study,” but they still could have separated out the two effects if they really wanted to communicate what was going on. In my opinion, this is not an outlier. It is another failure. I have to look at your slideshare carefully but it is important to remember that you cannot report just relative risk: you double your chances of winning the lottery by buying two tickets. In the Oslo study, there was a 19 % relative difference in total CHD mortality (94/79 = 1.19) but the change in absolute risk was 7.3 %, which is very different.
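The lottery comparison is easy to put in numbers. A minimal sketch, assuming a purely hypothetical one-in-ten-million chance per ticket (no real lottery is implied):

```python
from fractions import Fraction

# ASSUMPTION: hypothetical odds of winning with a single ticket.
p_one_ticket = Fraction(1, 10_000_000)
p_two_tickets = 2 * p_one_ticket

# Relative change: buying a second ticket doubles the chance.
relative_risk = p_two_tickets / p_one_ticket
# Absolute change: the chance rises by only one in ten million.
absolute_change = p_two_tickets - p_one_ticket

print(f"relative change: {relative_risk}x")
print(f"absolute change: {float(absolute_change):.7%}")
```

A doubling in relative terms sits alongside an absolute change that is negligible, which is why both numbers need to be reported.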

Thanks for your reply. But isn’t the use of relative risk an “every day practice” in popularisation of medical and nutrition science? I understand some of its pitfalls, and would prefer use of absolute risk though.

Yes. The use of relative risk is an everyday practice. Other everyday practices include:

1. Presenting your data grouped into quintiles instead of simply plotting individual values.

2. Presenting your data in large, complicated tables instead of simple graphs.

3. Treating progressive data (CVD events after 2, 5 and 10 years) as if they were trajectories (for every 1% reduction in x there will be a 10 % increase in CVD).

4. More generally, using group statistics on populations that you admit are heterogeneous.

5. Confusing “assignment to a diet” with “diet,” the standard sloppiness of intention-to-treat.

6. Citing studies, like the Oslo study, as if they were successes rather than what they actually were.

7. Referring to your experimental diet as “healthy” even if it does worse than the control.

8. Showing the error in your data as SEM rather than SD because it makes the data look better than it is.

These are all everyday practices and, if you are the editor of a journal, it is everyday practice to

1. Publish a critique of low-carbohydrate diets by a junior faculty in a Medicine Department who has never put a patient on a low-carbohydrate (or any other) diet, who took one course in biochemistry his first year in medical school and who has never read a scientific paper on low-carbohydrate diets.

2. Publish papers that do not cite previous work.

These are all everyday practices but the great thing about nutrition is that every day there is a new and far-out practice, usually from Jenkins’s lab (“Eco-Atkins,” “Nuts as a Replacement for Carbohydrates…”)

More seriously, although heart disease is a big killer, if you look at 1000 men for 5 years, there will be a small number of events, so relative risk by itself is not useful. There is, in any case, nothing wrong with it. The pitfall is in using only relative risk. Other measures, such as absolute risk, number needed to treat, or something that gives a good feel for the real effect, must also be included if you use relative risk.

Thanks, I will pay more attention to the points you raised on your list.

It would be interesting to read your take on the hierarchy of evidence in nutrition science. I mean morbidity/mortality RCTs on the top of the ranking followed by prospective cohorts and surrogate marker RCTs. Or have you already done something like it?

I had, in fact, written about that in a post called “Evidence-based Medicine. Who decides Admissibility. II. Mozart and the Levels of Evidence.” ( http://wp.me/p16vK0-4K ) where my take was that it did not make much sense. The idea that there is one type of experiment that is best for every question is not a good idea. The conclusion of that post was:

“So where do these arbitrary guidelines in EBM come from? They were set up by a medical community that is stereotyped as being untrained in science. I hate stereotypes, especially medical stereotypes since I think of myself as coming from a medical family (my father and oldest daughter are physicians) but stereotypes come from someplace…. In the end, it makes me think of the undoubtedly apocryphal story about Mozart.

A man comes to Mozart and wants to become a composer. Mozart says that they have to study theory for a couple of years, they should study orchestration and become proficient at the piano, and goes on like this. Finally, the man says “but you wrote your first symphony when you were 8 years old.” Mozart says “Yes, but I didn’t ask anybody.”

This hierarchy of evidence is not the work of scientists. I will discuss this again in the next post.

Great, I’m looking forward to your next post.

Looking at the actual numbers is indeed revelatory. If two Scandinavian studies are the “elephant” that the diet-heart hypothesis largely rests on, I think anyone equipped with critical thinking skills should be shocked. Or at least quite annoyed by the value, hype, policy change and impact that these studies have had worldwide.

I think the “crime” here is not sat fat per se, as nothing really makes it much more useful either, but the gargantuan wasting of resources and truckloads of money and closing of academic minds. As a sex researcher student, I’m all too familiar with this in social sciences. But finding the same in “harder” sciences is really appalling.

Reijo (up here) is doing a great public service in Finland by dissecting these key studies here, and in my mind a similar mindset of denial and silence prevails among many of the health professionals, even when the data is presented in front of their eyes.

The Oslo study’s diet group had fewer hypertensive men but the control group had more men aged sixty or older. Also, men in the control group were more overweight and the difference grew larger as the study went on. The diet group also had more fruit, vegetable and nut intake and FISH, so claiming this is a “clean” dietary fat change study is hogwash. They were even provided large quantities of sardines (in cod liver oil) free of charge!

Comparing the Oslo study to, say, Lyon Heart is far more appealing but of course in Lyon so many things were changed that nobody claims it to be a fat control study per se.

Weak elephants indeed. And elephants keep coming and going. Relying on large, “random controlled trials” and epidemiologic studies ensures that the data is always so-so. The key point is that saturated fat is made out to be undeniably bad but we are still doing studies which mostly show no effect but, more important, when they do have an effect, they are so weak that they are completely out of character with the dire warnings.

And what is the alternative? In vitro studies? Belittling best existing evidence related to a very real & large public health issue in a fashion of a first-year med student (a la THINCS)?

Asked and answered. The alternative depends on the question to be asked. Sometimes, in vitro is best. Some of my best friends are first year medical students.

“Sometimes, in vitro is best.”

Sometimes yes. Or rather, for some purpose. But generally not when one’s trying to investigate e.g. the efficacy of a diet in preventing cv-disease. Here the best option is to rely on RCTs and epidemiological data.

“Some of my best friends are first year medical students.”

That’s very nice. Hopefully that doesn’t mean that some of your best friends are from THINCS …

Suggest you start a blog providing a rebuttal to my friends at THINCS.